I am working on defining a way to model “data transfer” between the leaf actions of a BTree.

For a BTree at the component level, there are no open questions.

But for a BTree at the “atomic” function level, I have the following thoughts.

Capella does not aim to model the behaviour of atomic functions.

If we want to model the intrinsic behaviour of atomic functions, we need additional elements.

In Capella there are three levels of modeling:

- Functions and Functional Exchanges

- Components and Component Exchanges

- Physical Nodes and Physical Links

Functions hide the details of their implementation. In fact, Capella data flow diagrams do not show individual data flows explicitly; they show functional exchanges, which can contain separate input and output data flows.

To model the behaviour of atomic functions and the data flows between them in detail, we need one more level of modeling:

- FncBlocks and Data Exchanges

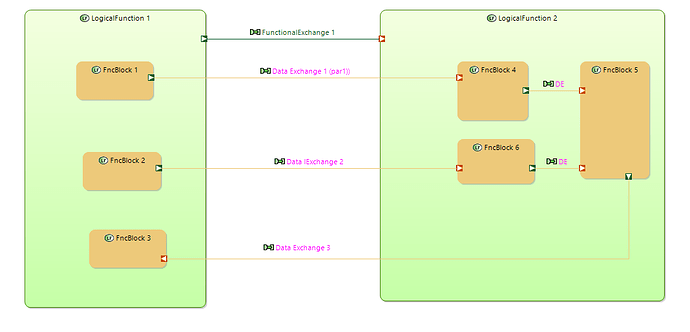

In the diagram, FncBlocks are shown in orange, and the data flows between FncBlocks are modeled explicitly.

FncBlocks can be used to model the implementation details of an atomic function. A FncBlock can be decomposed into sub-FncBlocks in the same manner as functions.

FncBlocks can be modeled separately from functions and “allocated” to functions at some later stage.

At that stage, FncBlocks are “packed” into functions in the same way that functions are “packed” into components. This gives another way to model implementation behaviour inside functions.
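The decomposition and allocation described above can be sketched as a tiny metamodel. This is a minimal sketch, assuming plain Python classes as stand-ins: `Function`, `FncBlock`, `add_child`, `leaves` and `allocate` are hypothetical names for illustration, not part of the Capella API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Function:
    """Stand-in for a Capella atomic function (hypothetical, not the Capella API)."""
    name: str
    # FncBlocks "packed" into this function by allocation.
    allocated_blocks: List[FncBlock] = field(default_factory=list)


@dataclass
class FncBlock:
    """Hypothetical FncBlock that decomposes into sub-FncBlocks like a function."""
    name: str
    children: List[FncBlock] = field(default_factory=list)
    # Excluded from repr/eq to avoid cycles through Function.allocated_blocks.
    allocated_to: Optional[Function] = field(default=None, repr=False, compare=False)

    def add_child(self, child: FncBlock) -> FncBlock:
        self.children.append(child)
        return child

    def leaves(self) -> List[FncBlock]:
        """Leaf FncBlocks of this decomposition (the lowest-level implementation steps)."""
        if not self.children:
            return [self]
        return [leaf for child in self.children for leaf in child.leaves()]


def allocate(block: FncBlock, function: Function) -> None:
    """Pack a FncBlock into a function, mirroring function-to-component allocation."""
    if block.allocated_to is not None:
        raise ValueError(f"{block.name} is already allocated to {block.allocated_to.name}")
    block.allocated_to = function
    function.allocated_blocks.append(block)


# Example: model the blocks first, allocate them to a function later.
nav = FncBlock("ComputePath")
nav.add_child(FncBlock("ReadMap"))
plan = nav.add_child(FncBlock("PlanRoute"))
plan.add_child(FncBlock("SearchGraph"))
allocate(nav, Function("Navigate"))
print([b.name for b in nav.leaves()])  # ['ReadMap', 'SearchGraph']
```

Keeping allocation as a separate step lets the FncBlock tree exist before any function owns it, which matches the idea of modeling FncBlocks independently and allocating them afterwards.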

Functional exchanges hide data transfers. They can contain exchange item elements (in and out).

They behave more like “function calls” with input parameters and output parameters. As a result, data value transfers are modeled only implicitly.

Data Exchanges can be used to model data value transfers between FncBlocks explicitly.

Several Data Exchanges can be allocated to one Functional Exchange.

“Data ports” can be used to model each input/output parameter used in a Functional Block.

“Data exchanges” can be used to show data transfers between functional blocks explicitly.
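The relationship between data ports, Data Exchanges and Functional Exchanges can be sketched as follows. This is a hedged illustration only: `Direction`, `DataPort`, `DataExchange`, `FunctionalExchange` and `carried_values` are hypothetical names, and the rule that a Data Exchange always goes from an OUT port to an IN port is an assumption made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Direction(Enum):
    IN = "in"
    OUT = "out"


@dataclass(frozen=True)
class DataPort:
    """One input or output parameter of a FncBlock (hypothetical element)."""
    block: str
    name: str
    direction: Direction


@dataclass(frozen=True)
class DataExchange:
    """Explicit transfer of one data value from an OUT port to an IN port."""
    source: DataPort
    target: DataPort

    def __post_init__(self) -> None:
        # Assumed well-formedness rule: data flows from an output to an input.
        if self.source.direction is not Direction.OUT:
            raise ValueError("source must be an OUT data port")
        if self.target.direction is not Direction.IN:
            raise ValueError("target must be an IN data port")


@dataclass
class FunctionalExchange:
    """Capella-level exchange; several DataExchanges can be allocated to it."""
    name: str
    data_exchanges: List[DataExchange] = field(default_factory=list)

    def allocate(self, dx: DataExchange) -> None:
        self.data_exchanges.append(dx)

    def carried_values(self) -> List[str]:
        """Names of the data values this exchange carries implicitly."""
        return sorted({dx.source.name for dx in self.data_exchanges})


# Example: two explicit data transfers hidden behind one functional exchange.
fe = FunctionalExchange("NavigationRequest")
fe.allocate(DataExchange(DataPort("Localize", "pose", Direction.OUT),
                         DataPort("PlanRoute", "pose", Direction.IN)))
fe.allocate(DataExchange(DataPort("Mission", "goal", Direction.OUT),
                         DataPort("PlanRoute", "goal", Direction.IN)))
print(fe.carried_values())  # ['goal', 'pose']
```

At the Capella level only `NavigationRequest` is visible; the FncBlock level keeps the two individual value transfers explicit.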

Previously, I tried to model the internal behaviour of functions using sub-functions, the data flows between sub-functions using functional exchanges, and data ports using functional ports. Now I think that a separate level is needed. This way, the new functionality stays compatible with “the Capella way”: atomic functions remain atomic, but if you need to model a function in detail, you can use an additional metamodel and notation.

If a BTree is defined for an atomic function, then the Functional Exchange is used to “tick” the behaviour tree of the called function and to provide the input parameters for the BTree. It also receives the result of the tick (SUCCESS, FAILURE, RUNNING) and the output parameters of the BTree.
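The tick-through-a-Functional-Exchange idea can be sketched as a call that seeds the BTree with input parameters and returns the tick status plus output parameters. This is a minimal sketch under stated assumptions: leaf actions share data through a dictionary "blackboard", a common behaviour-tree convention swapped in here for illustration rather than the Data Exchange approach above, and `Sequence`, `functional_exchange_call`, `check_battery` and `plan_route` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable, Dict, List, Tuple


class Status(Enum):
    SUCCESS = "SUCCESS"
    FAILURE = "FAILURE"
    RUNNING = "RUNNING"


Blackboard = Dict[str, Any]
# Assumption: a leaf action reads/writes the blackboard and returns a Status.
Action = Callable[[Blackboard], Status]


@dataclass
class Sequence:
    """Minimal memoryless sequence node: ticks children in order."""
    children: List[Action]

    def tick(self, bb: Blackboard) -> Status:
        for child in self.children:
            status = child(bb)
            if status is not Status.SUCCESS:
                return status  # FAILURE or RUNNING stops the sequence here
        return Status.SUCCESS


def functional_exchange_call(tree: Sequence, inputs: Blackboard,
                             output_names: List[str]) -> Tuple[Status, Blackboard]:
    """Hypothetical: the exchange ticks the called function's BTree once.

    The exchange's inputs become the BTree's input parameters; the caller
    receives back the tick result and whichever output parameters were set.
    """
    bb: Blackboard = dict(inputs)
    status = tree.tick(bb)
    outputs = {name: bb[name] for name in output_names if name in bb}
    return status, outputs


# Example leaf actions (hypothetical).
def check_battery(bb: Blackboard) -> Status:
    return Status.SUCCESS if bb["battery"] > 20 else Status.FAILURE


def plan_route(bb: Blackboard) -> Status:
    bb["route"] = ["A", bb["goal"]]
    return Status.SUCCESS


tree = Sequence([check_battery, plan_route])
status, out = functional_exchange_call(tree, {"battery": 80, "goal": "B"}, ["route"])
print(status, out)  # Status.SUCCESS {'route': ['A', 'B']}
```

With a low battery the same call returns `Status.FAILURE` and no `route` output, because the sequence stops before `plan_route` runs.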